Meta Replaces Fact-Checkers with User-Driven Community Notes, Drawing Criticism

By Ethan Eng

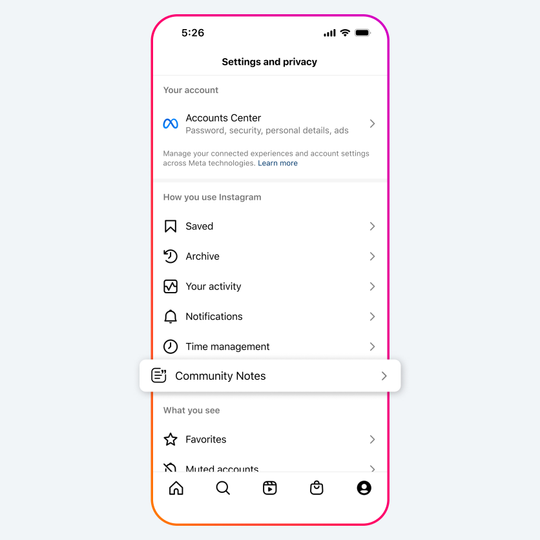

Meta, the owner of Facebook and Instagram, announced on January 7th the replacement of third-party fact-checkers with a community-driven notes system.

The policy change mirrors a similar move by X, formerly Twitter, which shifted toward relying on user-written notes on posts. In theory, the notes add context to potentially misleading posts.

Southern Poverty Law Center’s deputy director for data analytics and open-source intelligence, Megan Squire, says, “I'm hopeful, but I'm also concerned that this new structure that all the Meta properties are embarking on, it's just not going to end well.”

According to Meta’s Transparency Center, users must be at least 18, have an account that is at least six months old, have a verified phone number or two-factor authentication, and be in “good standing” to be eligible to participate in the system.

“There's going to be a rise in all kinds of disinformation, misinformation, from health to hate speech and everything in between,” Squire comments.

A note becomes visible only when Community Notes contributors from different points on the political spectrum agree on it. Without that cross-ideological agreement, the system does not attach the note to the post.

Alex Mahadevan of MediaWise, a social media literacy program, comments that “maybe that would have worked four years ago” but “that does not work anymore because 100 people on the left and 100 people on the right are not going to agree that vaccines are effective.”

Despite these limitations, research produced by Twitter suggests that once a note with cross-ideological agreement is publicly attached to a post, users who see it are “significantly less likely to reshare social media posts than those who did not see the annotations.”

However, “most notes on posts aren’t made public,” Mahadevan says.

“So this algorithm that was supposed to solve the problem of biased fact-checkers basically means there is no fact-checking,” he adds.

Mark Zuckerberg explains that the change in policy was due to third-party fact-checkers being “too politically biased” and having “destroyed more trust than they’ve created.”

Responding to Zuckerberg’s claim, Maria Ressa, a co-founder of the Rappler news site and Nobel Peace Prize winner, says that “Journalists have a set of standards and ethics” and “what Facebook is going to do is get rid of that and then allow lies, anger, fear and hate to infect every single person on the platform.”

K. Vish Viswanath, a professor of health communication at the Harvard T.H. Chan School of Public Health, says that “People are particularly hungry for information during times of crisis, and that provides an opportune time for misinformation to spread.”

“Misinformation can exacerbate health disparities within certain marginalized communities,” Viswanath explains. “For example, when anti-Asian racism spiked during COVID-19… on the societal level, misinformation can distort public opinion and potentially further erode trust in institutions.”

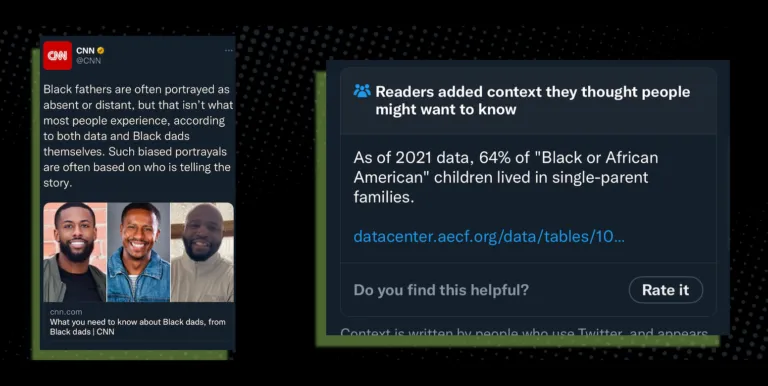

Mahadevan points to a Community Note attached to a CNN tweet that stated, “Black fathers are often portrayed as absent or distant, but that isn’t what most people experience, according to both data and Black dads themselves. Such biased portrayals are often based on who is telling the story.”

The user-written Community Note attached to the post claimed, “As of 2021 data, 64% of ‘Black or African American’ children lived in single-parent families,” and cited outdated data.

“Millions of people saw this,” Mahadevan says. “It was a racist note based on faulty data.”

The note stayed up for several days before users downvoted it and it was taken down.

To combat misinformation, Viswanath recommends “deplatforming accounts that are sources of misinformation,” increasing “the supply of accurate information… through science journalism,” and “supporting people as they seek out answers to pressing questions.”

He explains that when people don’t have access to accurate information, they are more receptive to misinformation.

Meta’s policy change continues to be debated for its implications for misinformation as the company begins to roll out its new system in March.